Google’s Vulnerability Patch for Antigravity AI Tool

Google has patched a critical security flaw in its Antigravity AI coding tool that could have allowed attackers to execute arbitrary commands, undermining the tool’s built-in safety features. The vulnerability was identified by researchers at Pillar Security.

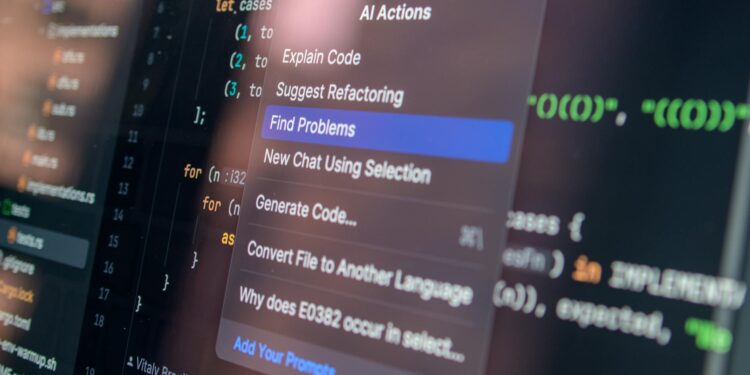

The issue stemmed from a prompt injection vulnerability, which allowed malicious code to be injected through specially crafted inputs. Security experts noted that weak input sanitization is not limited to Google’s AI tools; it is becoming pervasive across similar integrated development environments (IDEs) that use artificial intelligence for coding.

Details of the Vulnerability

The vulnerability abused Antigravity’s ability to perform filesystem operations, which could lead to unauthorized command execution. Affected users may have unknowingly triggered remote code execution (RCE) simply by using the AI’s file search capabilities. Researchers found that attackers could bypass the tool’s “Secure Mode,” which is designed to run command operations inside a restrictive sandbox that limits access to sensitive data and systems.

According to the analysis, the flaw resided in Antigravity’s “find_my_name” function, which is meant to facilitate file searches. Exploiting this function showed how even benign-looking prompts could lead to serious breaches, turning simple file search tasks into vectors for arbitrary code execution. The findings echo similar vulnerabilities identified in other AI-assisted coding tools, such as Cursor, and highlight systemic security challenges facing the AI development space.
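To illustrate the general class of problem, the following is a minimal Python sketch of how a file search helper might validate a pattern before handing it to an external command. It is not Antigravity’s code and does not reproduce the actual exploit: the `search-tool` binary, the `build_search_argv` function, and the allow-list are all hypothetical, and the sketch assumes the underlying command honors the common `--` end-of-options convention.

```python
import re
from typing import List

# Hypothetical allow-list: letters, digits, and a few glob characters.
_SAFE_PATTERN = re.compile(r"[A-Za-z0-9_.*?\[\]-]+")


def build_search_argv(pattern: str, root: str = ".") -> List[str]:
    """Build an argument list for a hypothetical `search-tool` binary.

    The pattern is treated as untrusted, since it may originate from
    model output that was influenced by injected instructions.
    """
    # Reject anything the search command could interpret as an option.
    if not pattern or pattern.startswith("-"):
        raise ValueError("pattern must not be empty or start with '-'")
    # Reject shell metacharacters, whitespace, and other unexpected input.
    if not _SAFE_PATTERN.fullmatch(pattern):
        raise ValueError("pattern contains disallowed characters")
    # Return a list (never a shell string) and use "--" so that everything
    # after it is parsed as a positional argument, not as a flag.
    return ["search-tool", "--", pattern, root]
```

Under these assumptions, `build_search_argv("*.py")` produces an argument list that could be passed to `subprocess.run` without a shell, while a pattern that begins with `-` or contains characters such as `;` is rejected before any command runs. Validation of this kind is only one layer; it does not replace sandboxing.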

This incident has significant implications for software developers who rely on AI tools like Antigravity. Developers face an ongoing risk of inadvertently compromising their systems when the tools they use contain undocumented vulnerabilities.

Industry Implications and Future Measures

Following the disclosure of the Antigravity vulnerability, stakeholders across the tech industry are urging a stricter approach to securing AI-assisted development tools. Experts predict that improved input validation methods and tighter security protocols will gain traction in upcoming regulatory frameworks governing AI technologies.

The need for robust security measures is pressing, especially as AI tools become prevalent in software development and create new attack surfaces for cybercriminals. Growing concerns about these risks may prompt major tech firms to reevaluate how they deploy AI technologies, paving the way for more adaptive security measures in the software development lifecycle.

Overall, the Antigravity incident is a reminder of the vulnerabilities present in AI-powered tools and of the need for developers and organizations to prioritize security as they adopt them.